What is insight?

- blewis

- Aug 13, 2024

Updated: Jul 21

Here is a lovely definition of insight from the Cambridge English Dictionary:

(the ability to have) a clear, deep, and sometimes sudden understanding of a complicated problem

B2B Sales and RevOps present particularly complicated problems: lots of messy data with high-variance outcomes representing chains of complex human interaction (the sales process). Such problems consist of inputs and outcomes: given the inputs, estimate the odds of the outcomes. Sales inputs include demand generation, deals in the pipeline and all their attributes, historical statistics, trends, and so on. The outcome of interest is usually sales. What are the odds we'll meet our goal this quarter? This year?

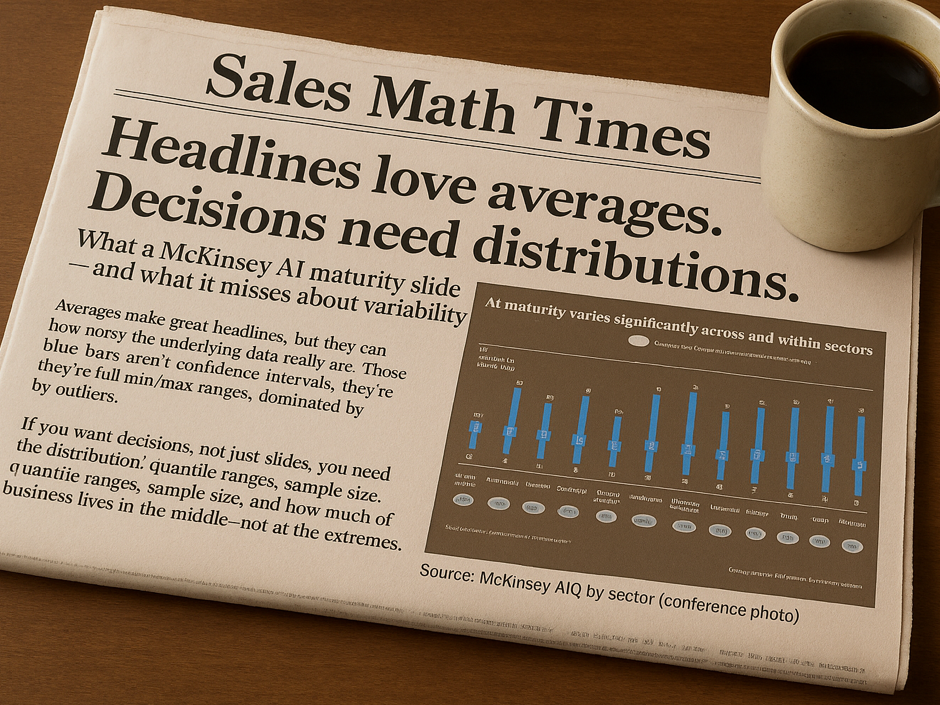
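The question "what are the odds we'll meet our goal?" can be sketched with a small Monte Carlo simulation. This is a minimal illustration, not Funnelcast's model: it assumes each open deal has a known amount and win probability and that deals close independently of one another. The function name and pipeline numbers are hypothetical.

```python
import random


def odds_of_meeting_goal(deals, goal, trials=20_000, seed=0):
    """Estimate P(total closed-won amount >= goal) by simulation.

    deals: list of (amount, win_probability) pairs (hypothetical inputs).
    Assumes every deal closes independently with its own probability.
    """
    rng = random.Random(seed)  # seeded so results are reproducible
    hits = 0
    for _ in range(trials):
        # One simulated quarter: each deal is won if a uniform draw < p.
        total = sum(amount for amount, p in deals if rng.random() < p)
        if total >= goal:
            hits += 1
    return hits / trials


# Hypothetical open pipeline: (deal amount, estimated win probability).
pipeline = [(50_000, 0.6), (120_000, 0.25), (30_000, 0.8), (80_000, 0.4)]
print(odds_of_meeting_goal(pipeline, goal=150_000))
```

Each trial plays out one possible quarter, and the share of trials that reach the goal is the estimated odds. In practice the win probabilities themselves would need to be estimated from historical data, which this sketch does not attempt.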

Clear and deep understanding of the Sales problem requires understanding how inputs, and changes in inputs, affect outcomes, at least on average. This can be done! Sometimes a valid understanding might even be the discovery that a particular input has no significant effect on outcomes. Many of our blog posts discuss specific examples, including win rates, Monte Carlo forecasting, and capacity planning, among others.

Eureka! Data-driven sales insight is possible!

What isn't insight?

The word insight is misused a lot in Sales and RevOps, especially in the context of learning from data. In fact, we are surprised at how rarely we see true insight in traditional B2B sales wisdom (coverage!) or software (AI!). Most of what we see out there does one of the following:

- Nicely displays a bunch of summarized data without any connection to outcomes.
- Assumes connections between inputs and outcomes without evidence.
- Compares data to an outcome (better) but without an understanding of odds.

Such "insights" are typically about as insightful as astrology.

The following illustration distills the kinds of "insights" provided by many applications into a representative example. It should look familiar to anyone who regularly uses RevOps or CRM software. We see a single Opportunity/Deal with a nicely summarized (but uninformative) bar plot of activity, some kind of deal score, its stage, and a bunch of color-coded "insights" that at first glance appear reasonable. But are they really?

Consider the first "insight": Close Date pushed back 2 times. The software flags this in red. It must be bad! Is it, though? Unless you measure the effect of pushing out deals on sales outcomes, you can't really say! It's remarkable how nearly universally this is assumed to be bad, yet how rarely it is measured. Maybe it is bad, maybe not.

It's easy to measure the impact of an input like Close Date pushes on an outcome like winning a deal. The plots below do this using data from three actual, very different, mid-sized companies. Data are filtered to include only new business. Opportunities are classified in two ways: by outcome (won or not won) and by how many times their close dates were pushed out of the quarter. So one dot on a graph with an x-value of 3 represents all opportunities whose close dates were pushed out 3 times. The vertical axis shows the number won divided by the total number of opportunities in each dot, an estimate of long-term win rate (higher is better!). Finally, the shaded bar represents a 90% interval for the win rate of each dot. Dots with overlapping shaded areas are hard to distinguish statistically.
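The per-dot calculation described above (group by push count, divide wins by total, attach an interval) can be sketched in a few lines. The post does not say how its shaded intervals are computed; this sketch assumes a Wilson score interval with z = 1.645 (roughly 90%, two-sided), and the function names and sample data are hypothetical.

```python
import math
from collections import defaultdict


def win_rate_by_pushes(opps, z=1.645):
    """Group opportunities by close-date push count.

    opps: iterable of (push_count, won) pairs, where won is True/False.
    Returns {push_count: (n, win_rate, low, high)}. low/high form a Wilson
    score interval; z=1.645 gives an approximate two-sided 90% interval.
    """
    counts = defaultdict(lambda: [0, 0])  # push_count -> [wins, total]
    for pushes, won in opps:
        counts[pushes][1] += 1
        if won:
            counts[pushes][0] += 1

    result = {}
    for pushes in sorted(counts):
        wins, n = counts[pushes]
        p = wins / n  # won / total: the dot's vertical position
        denom = 1 + z**2 / n
        center = (p + z**2 / (2 * n)) / denom
        half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
        result[pushes] = (n, p, max(0.0, center - half), min(1.0, center + half))
    return result


def intervals_overlap(a, b):
    """True if the (n, rate, low, high) intervals of two dots overlap."""
    return a[2] <= b[3] and b[2] <= a[3]


# Hypothetical data: 100 never-pushed deals (30 won), 10 pushed 3 times (2 won).
opps = [(0, True)] * 30 + [(0, False)] * 70 + [(3, True)] * 2 + [(3, False)] * 8
rates = win_rate_by_pushes(opps)
print(rates)
print(intervals_overlap(rates[0], rates[3]))
```

Here the observed win rates differ (30% versus 20%), but with only ten pushed deals the intervals overlap, so this data could not tell the two apart. A Beta posterior or a bootstrap would be equally reasonable ways to build the interval.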

What do the data say? The first plot is what I gather most people think the effect of pushes looks like. But for the other companies it's at best unclear whether more pushes out of the quarter make an opportunity less likely to close! Pushes are indeed bad for some companies, but not so bad, or even arguably good, for others: the opposite of what the "insight" above indicates!
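One way to ask whether pushes make any detectable difference is a permutation test on win rates. This is a swapped-in technique, not necessarily how the plots in this post were made. It assumes opportunities are split into two hypothetical groups (never pushed versus pushed at least once), with outcomes coded 1 for won and 0 otherwise.

```python
import random


def permutation_p_value(group_a, group_b, n_perm=10_000, seed=0):
    """Two-sided permutation test for a difference in win rate.

    group_a, group_b: lists of 0/1 outcomes (1 = won).
    Returns an estimated p-value: how often randomly relabeling the pooled
    outcomes produces a win-rate gap at least as large as the observed one.
    """
    rng = random.Random(seed)
    observed = abs(sum(group_a) / len(group_a) - sum(group_b) / len(group_b))
    pooled = list(group_a) + list(group_b)
    n_a = len(group_a)
    extreme = 0
    for _ in range(n_perm):
        rng.shuffle(pooled)  # break any link between group label and outcome
        a, b = pooled[:n_a], pooled[n_a:]
        gap = abs(sum(a) / len(a) - sum(b) / len(b))
        if gap >= observed - 1e-12:  # small tolerance for float round-off
            extreme += 1
    # +1 in numerator and denominator counts the observed labeling itself.
    return (extreme + 1) / (n_perm + 1)


# Hypothetical outcomes: never-pushed deals vs. deals pushed at least once.
never_pushed = [1] * 30 + [0] * 70
pushed = [1] * 12 + [0] * 48
print(permutation_p_value(never_pushed, pushed))
```

A small p-value suggests the gap is unlikely to be chance alone; a large one means the data cannot distinguish the groups, which, as noted earlier, can itself be a valid finding.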

The other "insights" are similarly uninsightful. Length in stage is at least compared to previously won opportunities, but with no indication of whether the difference is significant. The others tell you things you already know or simply summarize data, without any indication of their effect on the outcome.
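A small step past a raw comparison is to locate the current deal within the distribution of time in stage for previously won deals. This is a hypothetical helper, not a feature of any particular product, and it still does not measure the effect of time in stage on winning; that would require relating time in stage to win rates, just as with close-date pushes.

```python
from bisect import bisect_left


def share_won_at_least_as_long(won_days_in_stage, current_days):
    """Fraction of previously won deals that spent at least `current_days`
    in this stage. Values near 0 mean the current deal has already been in
    the stage longer than almost every deal that was eventually won."""
    if not won_days_in_stage:
        raise ValueError("need at least one won deal to compare against")
    ordered = sorted(won_days_in_stage)
    # Index of the first won deal with days >= current_days.
    idx = bisect_left(ordered, current_days)
    return (len(ordered) - idx) / len(ordered)


# Hypothetical days-in-stage for five previously won deals.
won = [5, 10, 15, 20, 40]
print(share_won_at_least_as_long(won, 30))  # 0.2: only the 40-day deal
```

With only a handful of won deals this fraction is itself noisy, so it is a rough signal at best.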

Presented without context, these are not really insights and might even be wrong. Similar misconceptions are seemingly everywhere in Sales and RevOps: pipeline coverage, chasing hyper-accurate early forecasts, and many others.

The next time someone proposes "insights" to help run your sales organization, be skeptical. Fortunately, it's easy to put any metric or claim of insight on a sound footing: simply measure its effect on the outcome you care about!
